Hey guys, So here comes the second blog of the Handwritten notes series which we started last time. We will be talking about Pandas in this blog. I have uploaded my handwritten notes below and tried to explain them in the shortest and best way possible.

The first blog of this series was NumPy Handwritten Notes. If you haven’t seen those yet, go check out NUMPY LAST-MINUTE NOTES.

Let’s go through the Pandas notes…

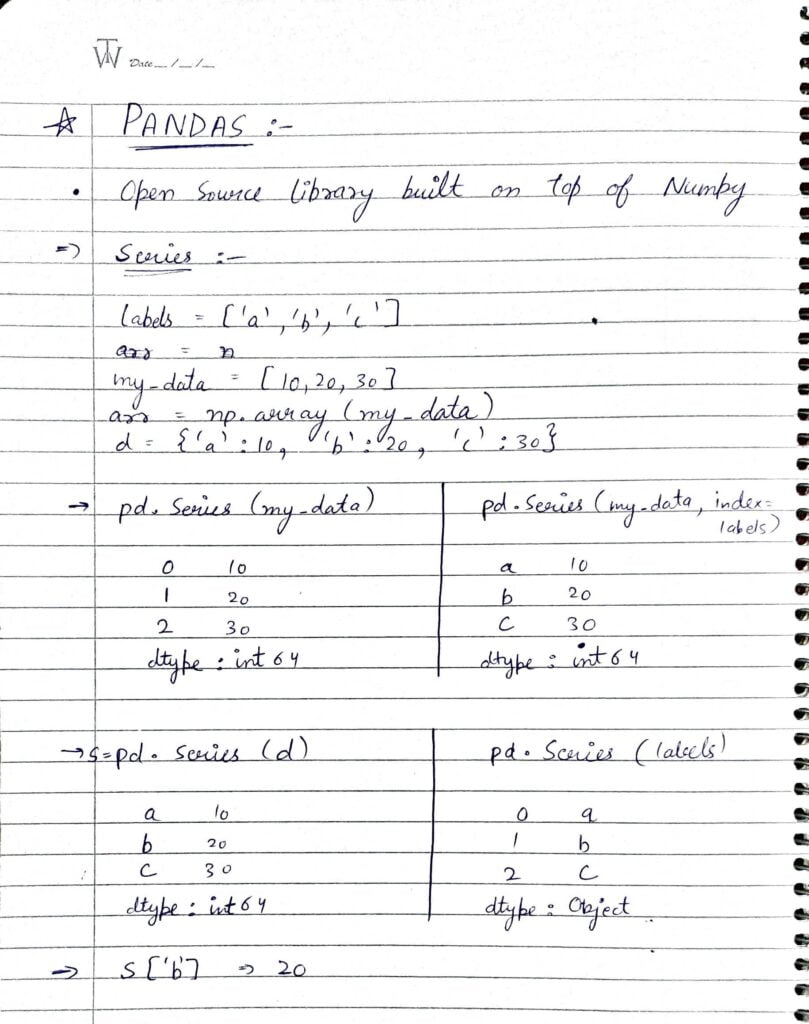

- Pandas is built on NumPy.

- Pandas Series is a one-dimensional labeled array capable of holding data of any type (integer, string, float, python objects, etc.)

- We use pd.Series to create a Pandas Series object.

- In the image above I have shown 4 different ways of creating Pandas Series.

- In the first way I have just passed on a list. Thats why it has taken default indices as 0,1,2,etc.

- In the second way I have passed on data list with a index list also.

- In the third way we have created a Series from a dictionary.

- In the fourth way, we have done exactly similar operation as the first way with just different list.

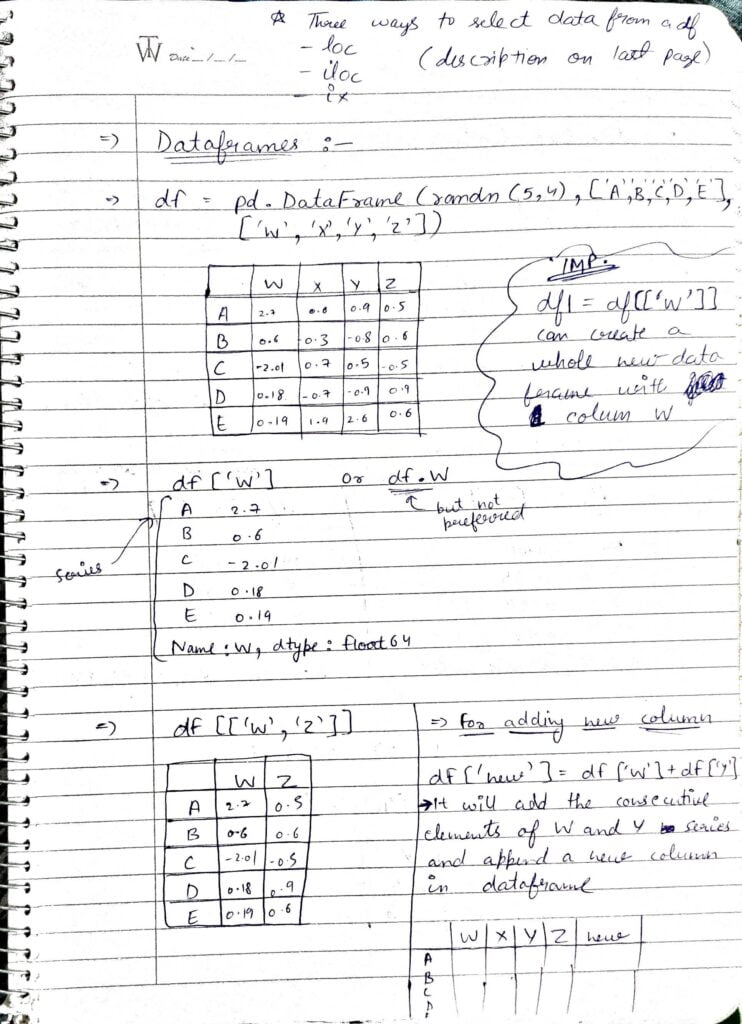

- Dataframe is two-dimensional, size-mutable, potentially heterogeneous tabular data. The data structure also contains labeled axes (rows and columns). Arithmetic operations align on both row and column labels. Can be thought of as a dict-like container for Series objects. The primary pandas data structure.

- Making a dataframe with data as some random data, columns as W, X, Y, Z, and rows as A, B, C, D, E.

- We can extract columns out of the dataframe using df[‘W’] or df.W but the second one is not preferred. Both of these will return columns as Series objects.

- Also, we can make a dataframe out of the current dataframe with lesser columns by doing something like df[[‘W’,’Z’]].

- We can also add new columns in the current dataframe by doing df[‘name of new column’] = some list or series.

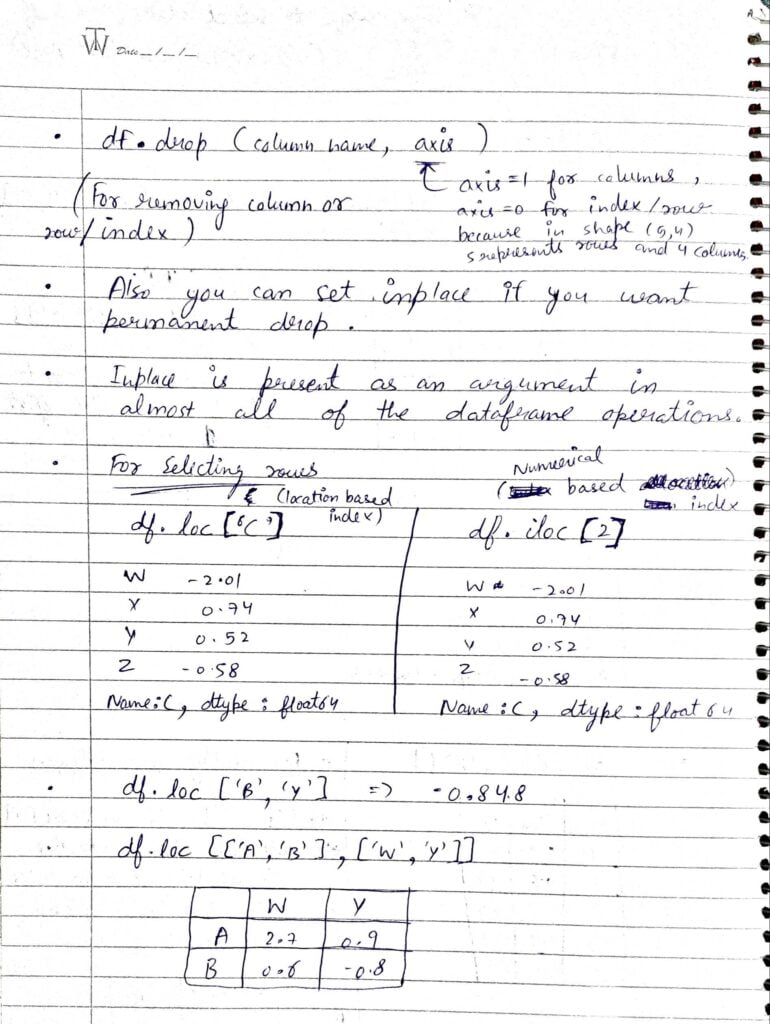

- df.drop() is used to drop rows or columns. To drop rows just use df.drop(row index, axis=0). To drop column use df.drop(column name, axis=1).

- We can also set inplace=True if we want the drop operation in our original dataframe also.

- df.loc() and df.iloc() both are used for selecting rows or we can say locating rows. The iloc method uses the index of the row and loc uses the name of the index.

- df.loc[[‘A’,’B’],[‘W’,’Y’]] is also a method used for extracting custom dataframes from our main dataframe.

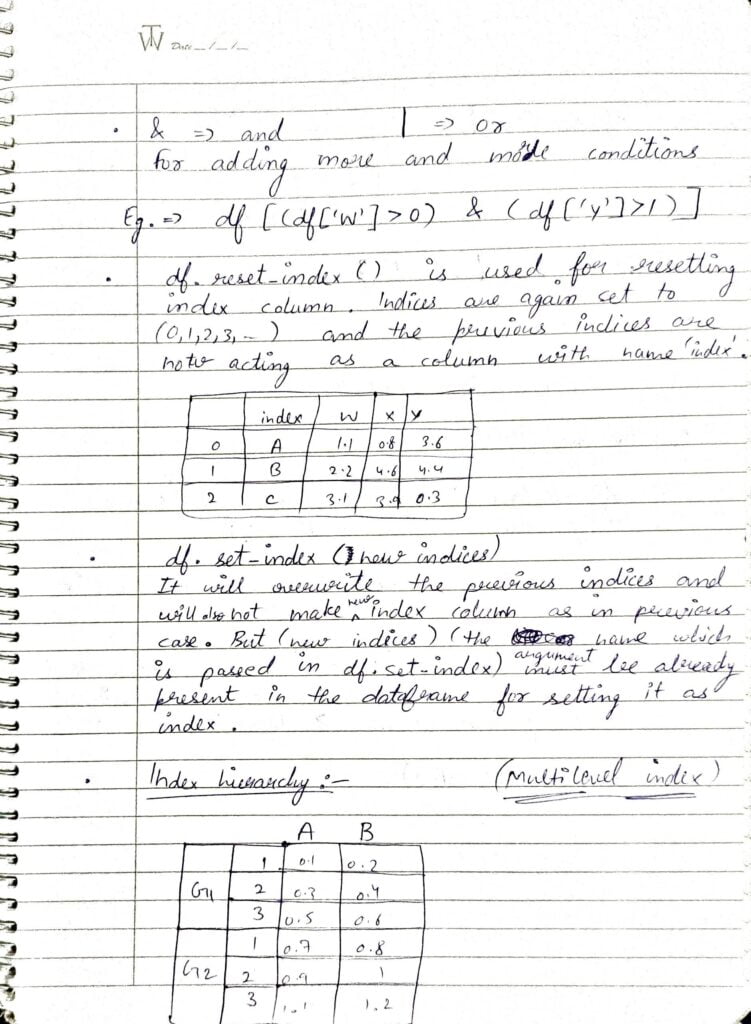

- & represents AND operator and | represents OR operator. These are used when we want to add multiple conditions.

- df.reset_index() is used to reset indices of the dataframes. Now the old index column also gets added to the dataframe with column name as ‘index‘.

- This resetting operation is generally required when we drop nulls then many of the rows with null data gets dropped and indices do not remain sequential.

- df.set_index() is used to set values of our own choice as new indices for our dataframe.

- Index hierarchy is shown in the last point.

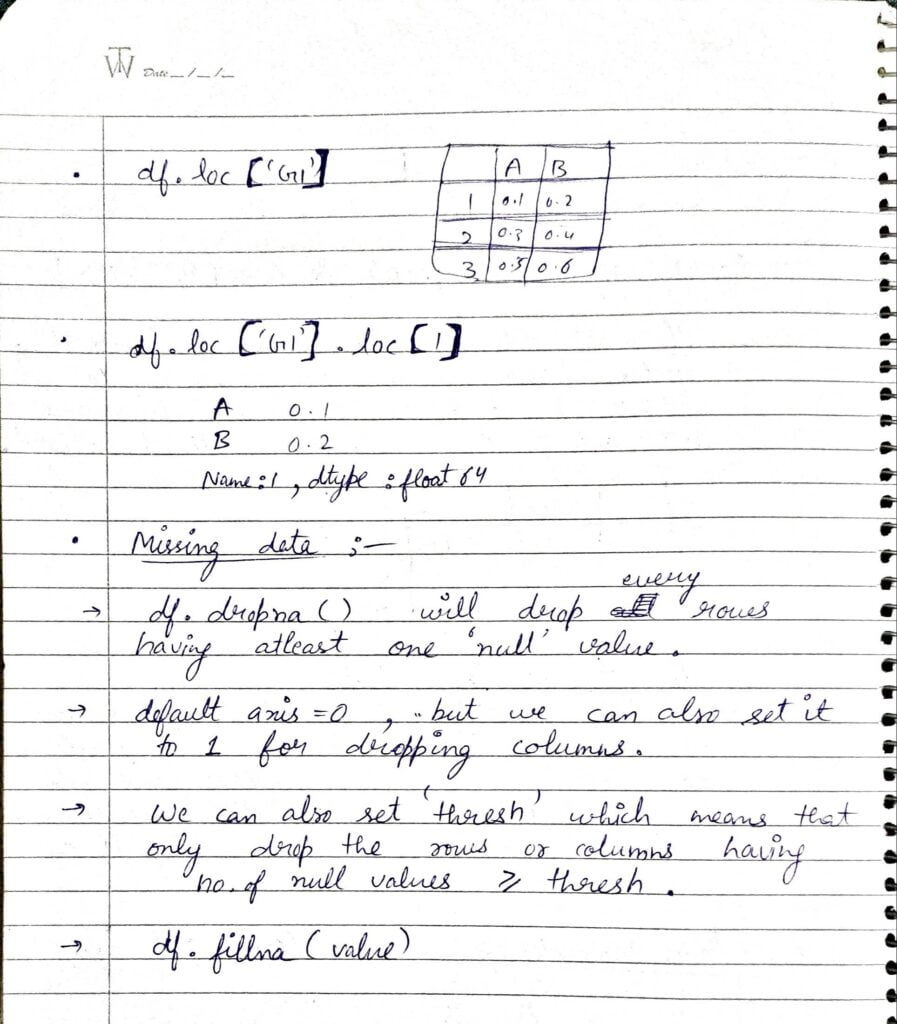

- df.loc[‘G1’] will select rows will index as G1. Here G1 and G2 are first level indices and 1, 2, 3 are second level indices.

- df.loc[‘G1’].loc[1] will give the Series object as shown above.

- df.dropna() will drop every row having even 1 NULL element.

- Here we are dropping rows with this df.dropna() because axis=0 by default, if we set it to 1 we can also drop columns.

- We can also set thresh value ,means if we set thresh as 5, then it will only drop rows if the no. of NULLs in that row is greater than equal to 5.

- We can also fill NULL positions with some values. Most of the times it is the mean of the column.

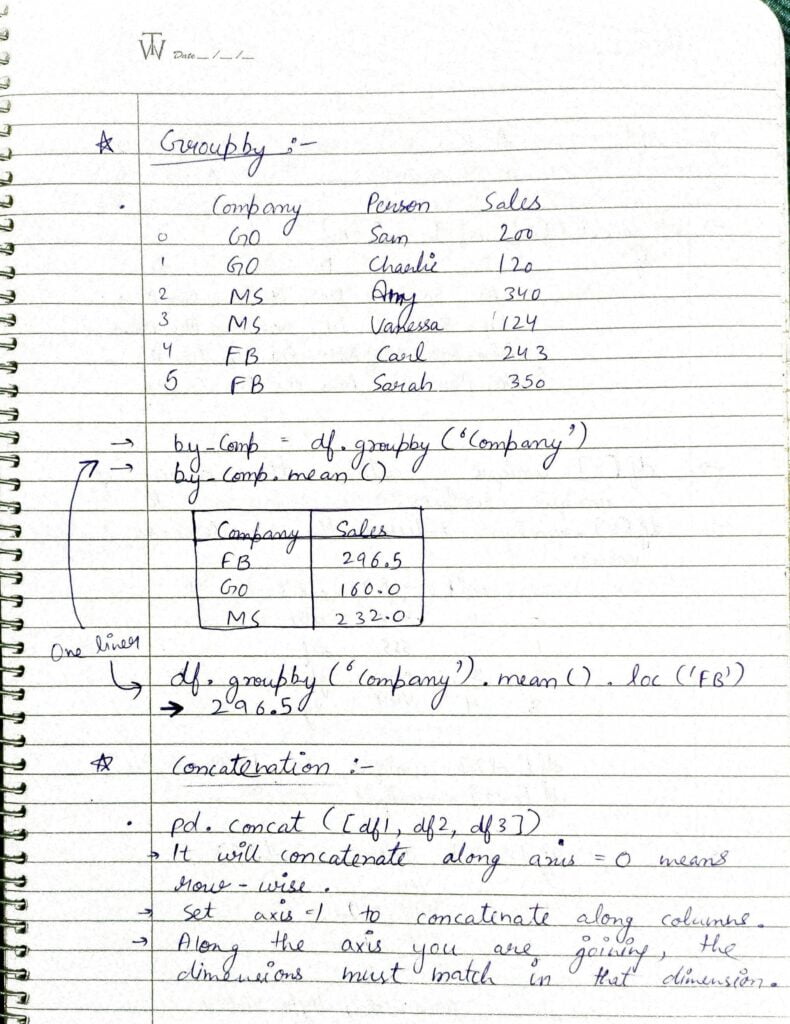

- df.groupby() is a very special operation in pandas. Using the Groupby function we can group rows based on some values.

- Like in this example we are grouping by ‘Company‘ column. Then we are taking the mean of the resulting dataframe. Person column will get dropped automatically while mean operation.

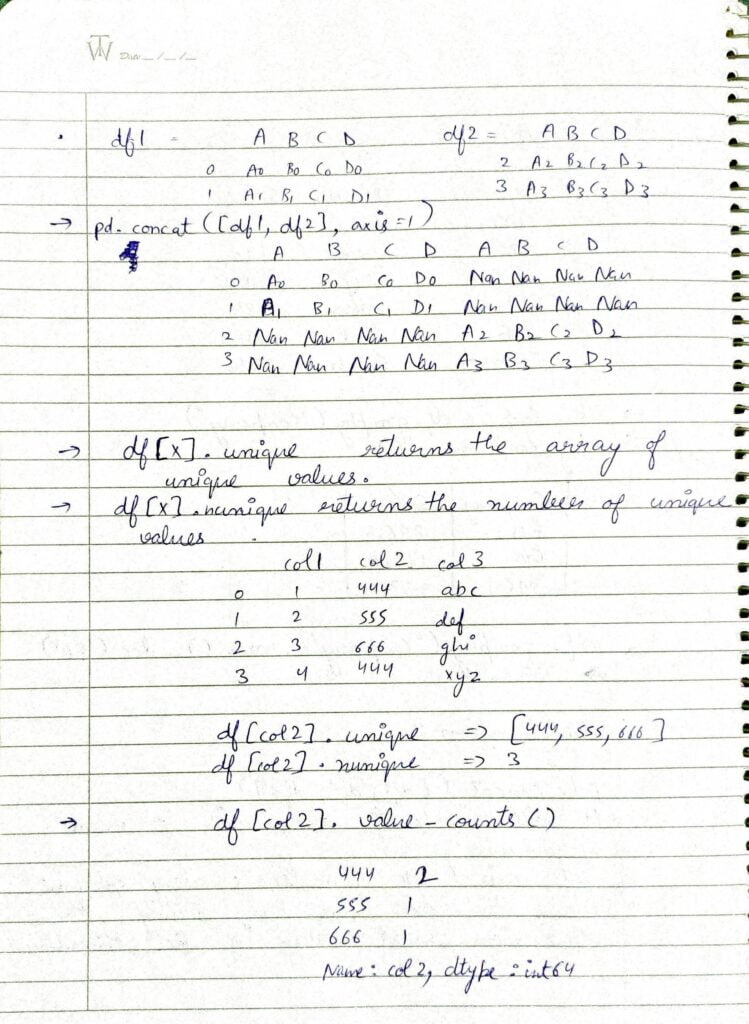

- pd.concat() is also a very useful operation in pandas using which we can join 2 or more dataframes to form one final dataframe. By default, it will concatenate row-wise, if you want to do it column-wise, pass axis=1 as a parameter.

- As we can see in the first example that we joined column-wise but due to the indices mismatch, those null values occurred.

- df[‘column name’].unique() return the array of all the unique values in that column.

- df[‘column name’].nunique() return the number of unique values in that column.

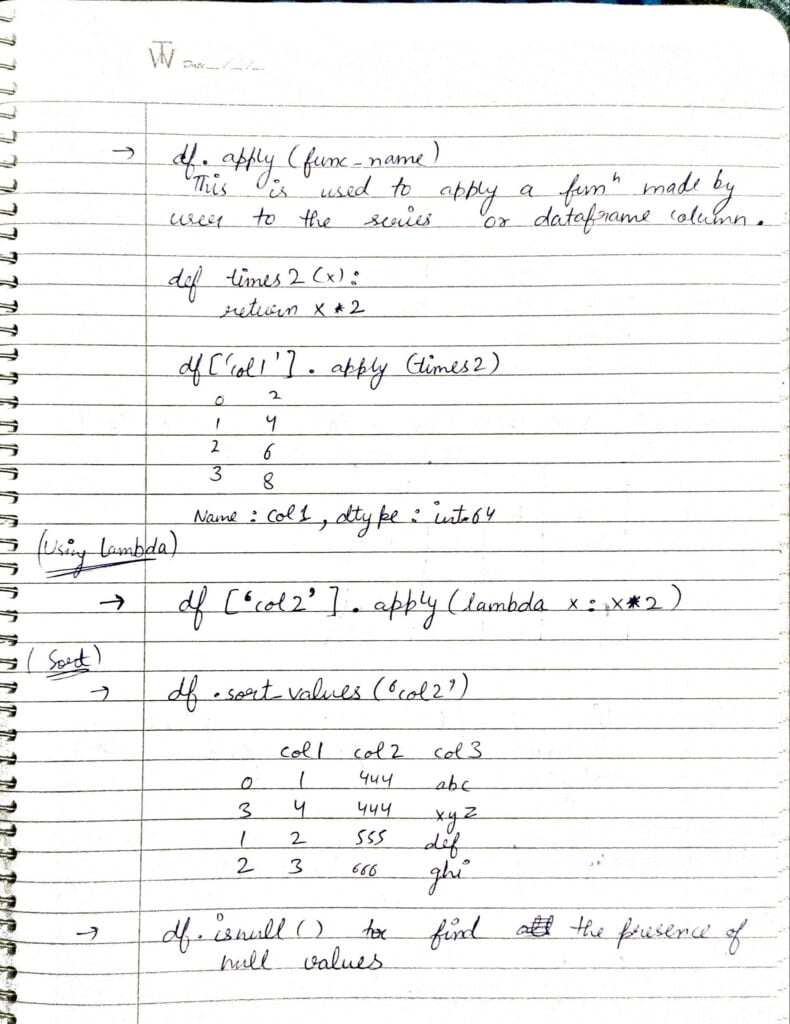

- df.apply() will apply a function on all the values of that column or Series or Dataframe.

- df.sort_values(‘column name’) will sort the dataframe according to that column.

- df.isnull() is used to find the presence of NULL values.

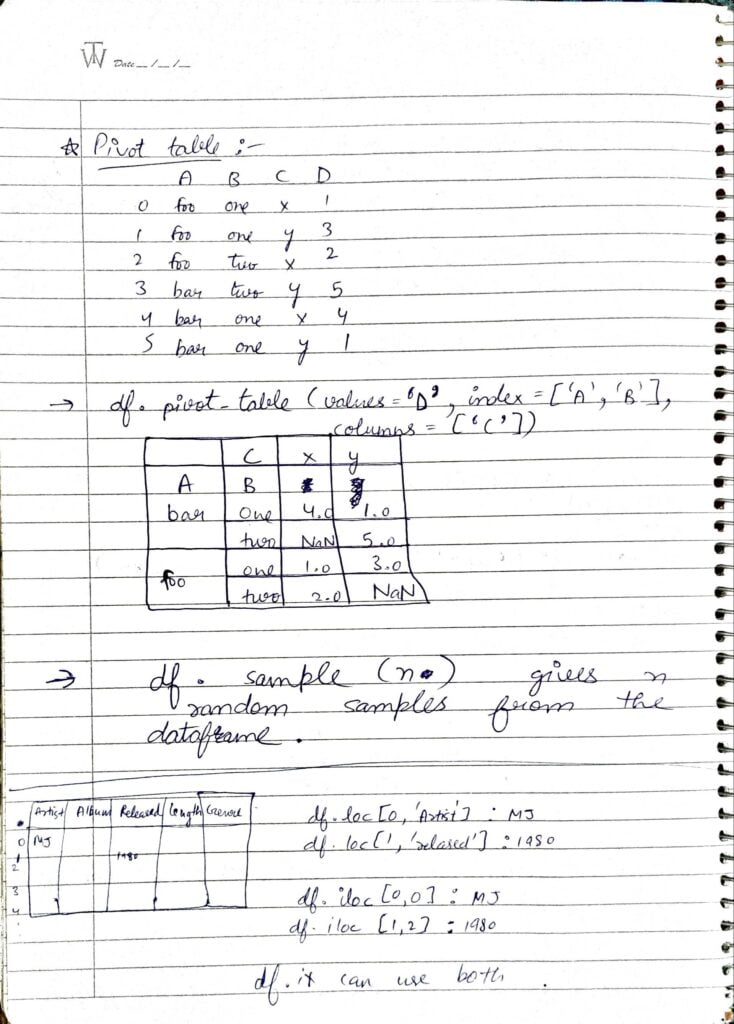

- df.pivot_table(values, index, column) will create table like shown above. Pivot tables prove to be highly useful in Data Science use cases.

- df.sample(n) will give n random samples from the dataframe.

Do let me know if there’s any query regarding Numpy by contacting me on email or LinkedIn.

So this is all for this blog folks, thanks for reading it and I hope you are taking something with you after reading this and till the next time ?…

Read my previous blog: NUMPY – LAST-MINUTE NOTES – HANDWRITTEN NOTES

Check out my other machine learning projects, deep learning projects, computer vision projects, NLP projects, Flask projects at machinelearningprojects.net.