In today’s blog, we will see how we can perform stock sentiment analysis using the headlines of a newspaper. We will predict if the stock market will go up or down. This is a simple but very interesting project due to its prediction power, so without any further due, Let’s do it…

Step 1 – Importing libraries required for Stock Sentiment Analysis.

import pandas as pd import pickle import joblib from sklearn.ensemble import RandomForestClassifier from sklearn.feature_extraction.text import CountVectorizer from sklearn.metrics import confusion_matrix,accuracy_score

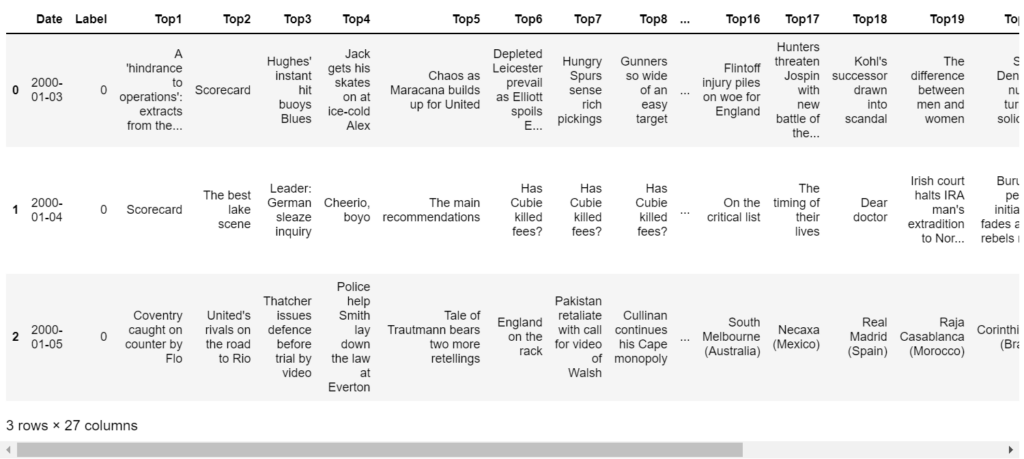

Step 2 – Reading input data.

df = pd.read_csv('data/Data.csv')

df.head(3)

Step 3 – Cleaning our data.

headlines = []

cleaned_df = df.copy()

cleaned_df.replace('[^a-zA-Z]', ' ',regex=True,inplace=True)

cleaned_df.replace('[ ]+', ' ',regex=True,inplace=True)

for row in range(len(df)):

headlines.append(' '.join(str(x) for x in cleaned_df.iloc[row,2:]).lower())

- We are using regex here to replace everything that’s not a-z or A-Z by a space.

- Then we are just removing the extra spaces using regex.

- Finally, we are converting everything to lowercase, joining every headline from column 2 to 27 (because column 0 is a timestamp and column 1 is a label) and appending it to our headlines array.

Step 4 – Initializing CountVectorizer.

cv = CountVectorizer(ngram_range=(2,2)) cv.fit(headlines)

- Simply initializing CountVectorizer to convert headlines to a bag of words(vectors).

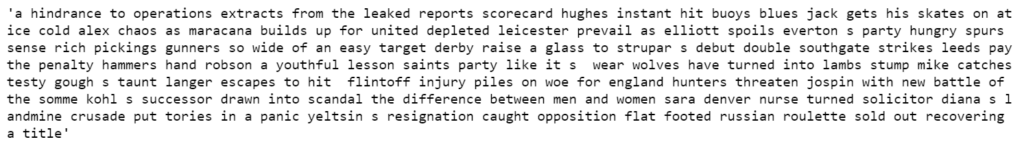

Step 5 – Checking our data.

headlines[0]

Step 6 – Splitting data.

train_data = cleaned_df[df['Date']<'20150101'] test_data = cleaned_df[df['Date']>'20141231'] train_data_len = len(train_data) train_headlines = cv.transform(headlines[:train_data_len]) test_headlines = cv.transform(headlines[train_data_len:])

- Splitting our data based on timestamp.

- train data is all before 1 Jan 2015 (excluding it).

- test data is all after 31 December 2014 (starting from 1 Jan 2015).

- Then simply transform headlines using CountVectorizer we initialized above.

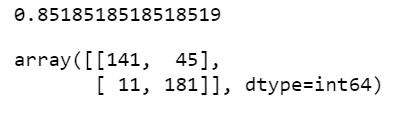

Step 7 – Training our model.

rfc = RandomForestClassifier(n_estimators=200,criterion='entropy') rfc.fit(train_headlines,train_data['Label']) preds = rfc.predict(test_headlines) print(accuracy_score(test_data['Label'],preds)) confusion_matrix(test_data['Label'],preds)

- Here we are using RandomForestClassifier to train our model.

- Fitting train data.

- Taking predictions.

- Printing its performance.

Step 8 – Saving our model.

joblib.dump(rfc, 'stock_sentiment.pkl')

- Simply using joblib to save our trained model.

Download Source code and Data for Stock Sentiment Analysis…

Do let me know if there’s any query regarding Stock Sentiment Analysis by contacting me by email or LinkedIn.

So this is all for this blog folks, thanks for reading it and I hope you are taking something with you after reading this and till the next time ?…

Read my previous post: Movie Recommendation System in 2 ways

Check out my other machine learning projects, deep learning projects, computer vision projects, NLP projects, Flask projects at machinelearningprojects.net.