Hey guys, So here comes the eighth blog of the Handwritten notes series which we started. We will be talking about K-Nearest Neighbors (KNN) in this blog. I have uploaded my handwritten notes below and tried to explain them in the shortest and best way possible.

The first blog of this series was NumPy Handwritten Notes and the second was Pandas Handwritten Notes. If you haven’t seen those yet, go check them out.

Let’s go through the K-Nearest Neighbors notes…

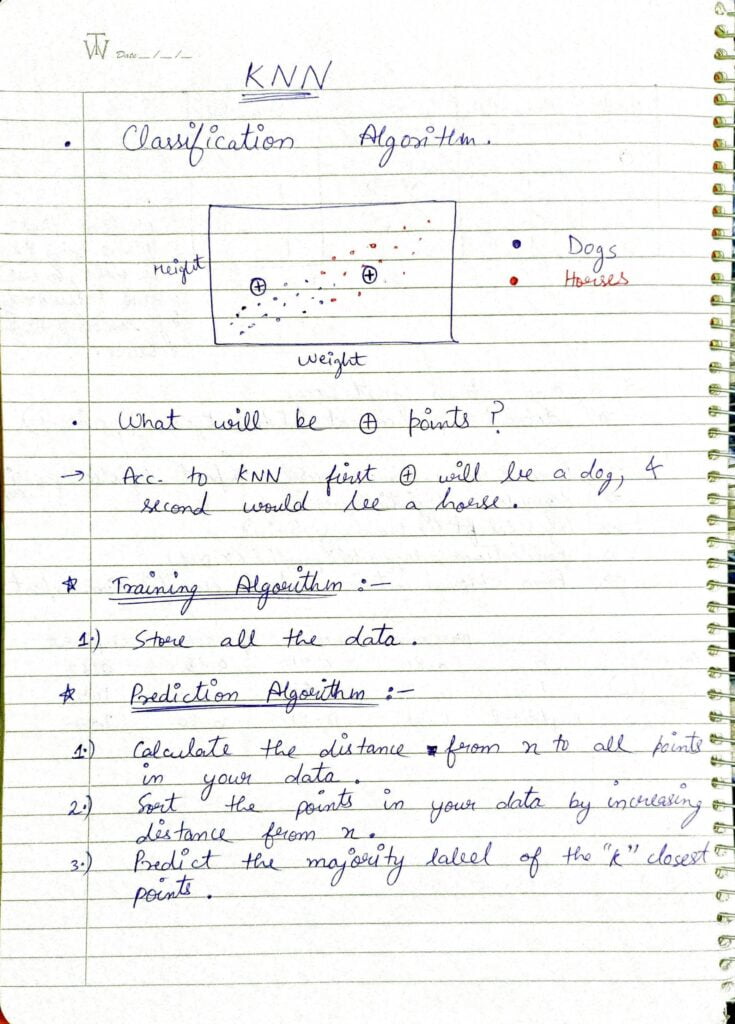

- In the first diagram, I have plotted dog and horse classes based on weight and height.

- Given this plot, if asked to predict the class of + points, would you be able to classify them correctly? And if yes, what approach will you use to do so?

- The most naive approach to do so will be to see some of the nearest points and make a prediction based on that.

- This is called the K-Nearest Neighbors algorithm. Based on the K-nearest data points, we predict the class of the data point under test.

- In the training phase, KNN does not make any type of discriminator function or anything else. It just stores all the data points.

- In the testing phase, it just calculates the euclidean distance of all points from the test point and sorts them in increasing order. Then it takes the first k-nearest points and sees which class is the majority class. And this majority class is allotted to the test point.

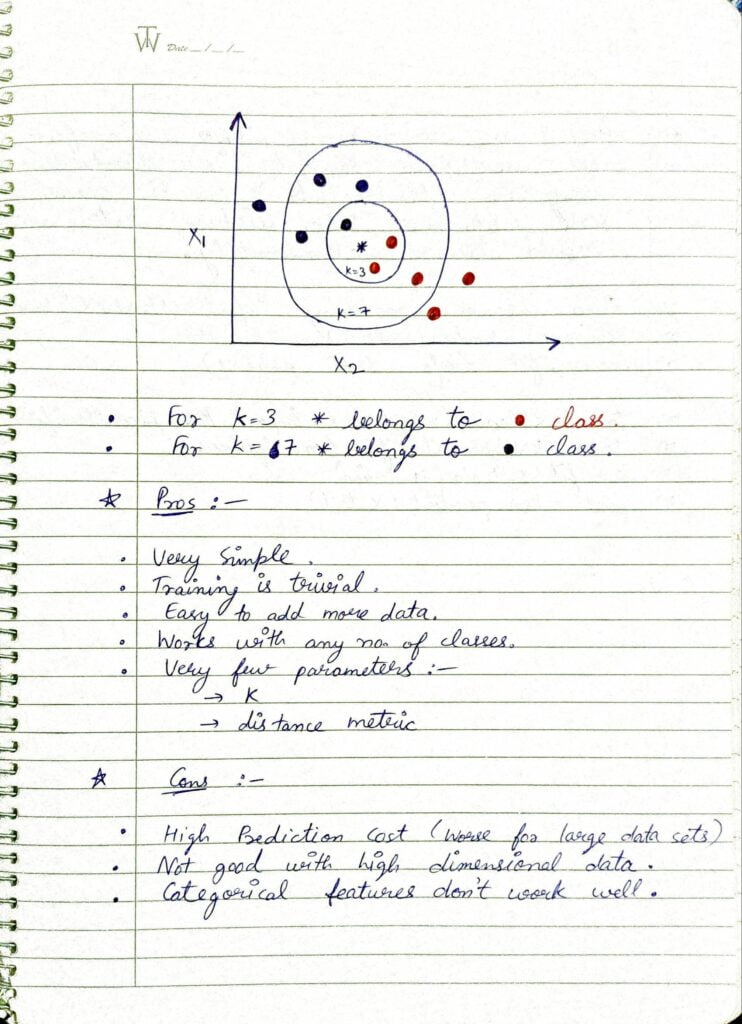

- This diagram shows how the KNN classification algorithm works.

- For different k’s, it will give different answers.

- Pros:

- Very Simple.

- Training is trivial.

- Easy to add more data.

- Works with any no. of classes.

- Very few parameters (k and distance metric).

- Cons:

- Worse for large datasets.

- Not good for high-dimensional data.

- Categorical features don’t work well.

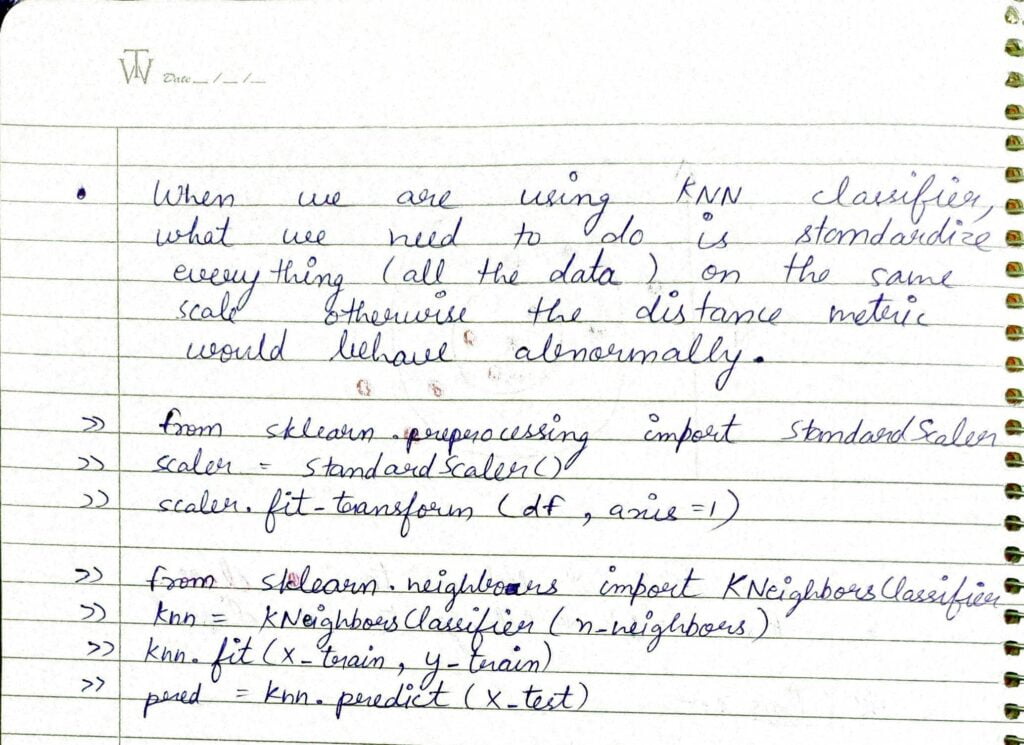

- One thing to note while using KNN is that it is a distance-based algorithm and it expects everything to be on the same scale.

- That’s why we need to use Standard Scaler or Min-Max Normalization in this case.

Do let me know if there’s any query regarding K-Nearest Neighbors (KNN) by contacting me on email or LinkedIn.

So this is all for this blog folks, thanks for reading it and I hope you are taking something with you after reading this and till the next time ?…

READ MY PREVIOUS BLOG: LOGISTIC REGRESSION – LAST-MINUTE NOTES

Check out my other machine learning projects, deep learning projects, computer vision projects, NLP projects, Flask projects at machinelearningprojects.net.