Hey guys, So here comes the eleventh blog of the Handwritten notes series which we started. We will be talking about Principal Component Analysis in this blog. I have uploaded my handwritten notes below and tried to explain them in the shortest and best way possible.

The first blog of this series was NumPy Handwritten Notes and the second was Pandas Handwritten Notes. If you haven’t seen those yet, go check them out.

Let’s go through the Principal Component Analysis notes…

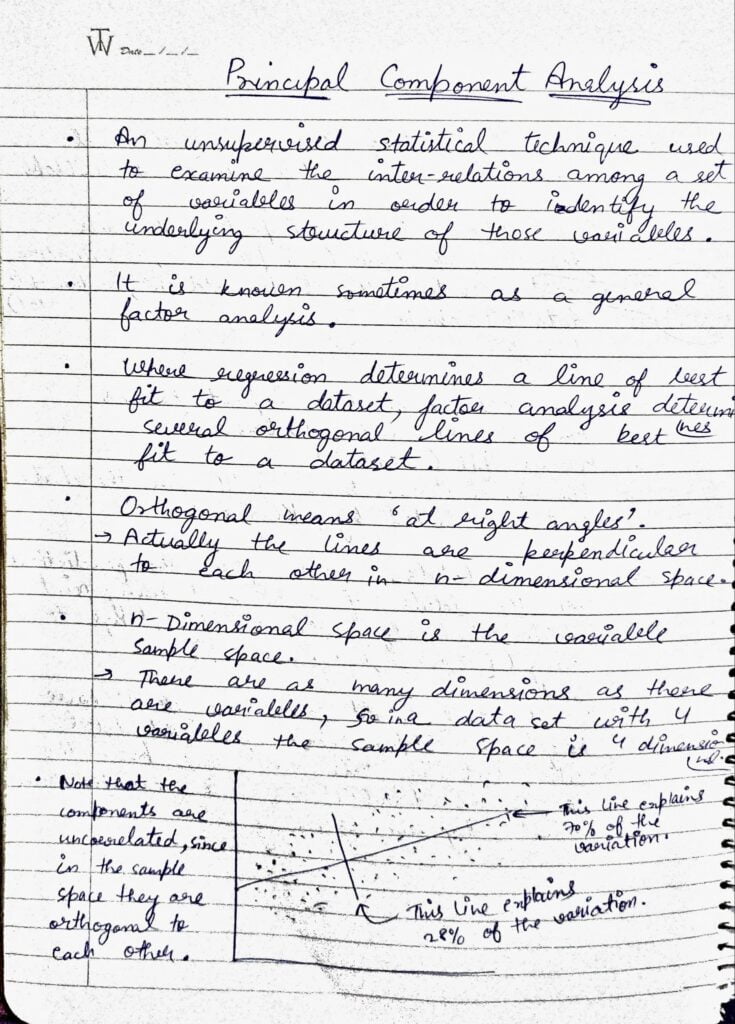

- PCA is an unsupervised statistical technique used to examine the inter-relations among a set of variables in order to identify the underlying structure of those variables.

- It is sometimes also known as General Factor Analysis.

- Where regression determines a line of best fit to a dataset, factor analysis determines several orthogonal lines of best fit to a dataset.

- Orthogonal means ‘at right angle’. Actually, the lines are perpendicular to each other in n-dimensional space.

- n-dimensional space is the variable sample space.

- There are as many dimensions as there are variables, so in a dataset, with 4 variables the sample space is 4 dimensional.

- Note that the components are uncorrelated, as they are orthogonal to each other.

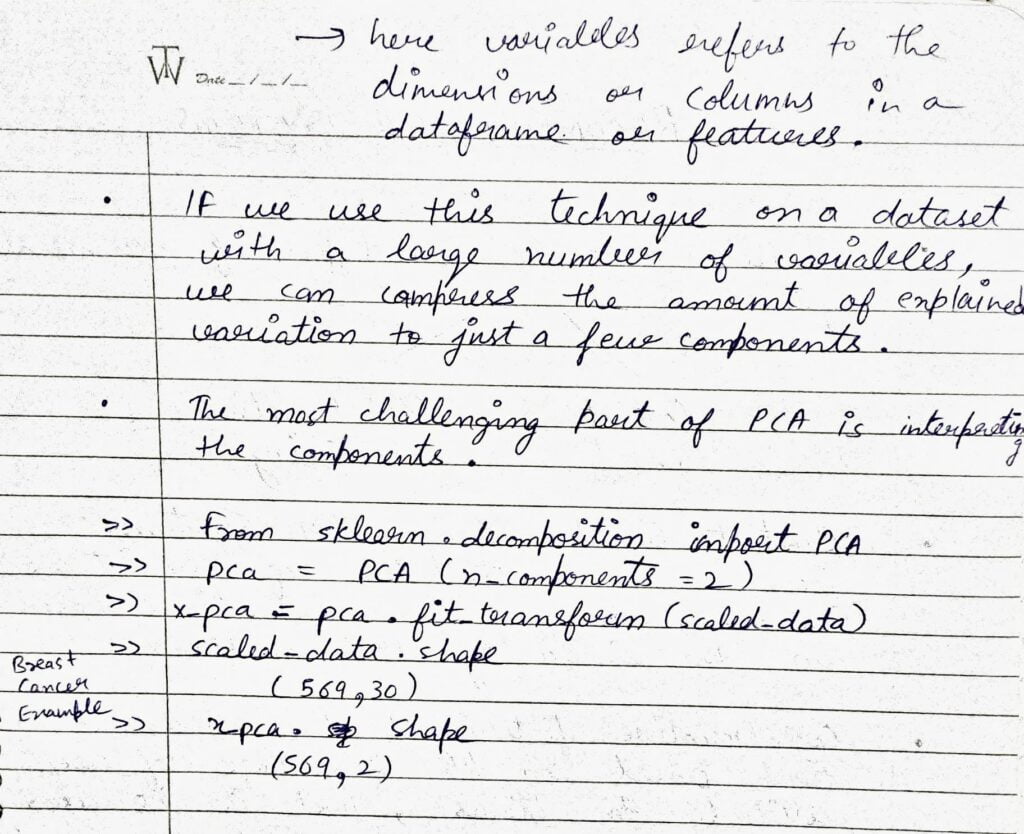

- If we use this technique on a dataset with a large number of variables, we can compress the amount of explained variation to just a few components.

- The most challenging part of PCA is interpreting the components.

- We can use sklearn.decomposition.PCA to implement it in Python.

Do let me know if there’s any query regarding Principal Component Analysis by contacting me on email or LinkedIn.

So this is all for this blog folks, thanks for reading it and I hope you are taking something with you after reading this and till the next time ?…

READ MY PREVIOUS BLOG: KMEANS – LAST-MINUTE NOTES

Check out my other machine learning projects, deep learning projects, computer vision projects, NLP projects, Flask projects at machinelearningprojects.net.