Hey guys, this is the tenth blog in the Handwritten Notes series, and this time we will be talking about KMeans. I have uploaded my handwritten notes below and tried to explain them as briefly and clearly as possible.

The first blog of this series was NumPy Handwritten Notes and the second was Pandas Handwritten Notes. If you haven’t seen those yet, go check them out.

Let’s go through the KMeans notes…

- KMeans is an unsupervised clustering algorithm that groups similar data points together into clusters.

- It is widely used in:

  - Clustering similar documents

  - Clustering customers based on features

  - Market segmentation

  - Identifying similar physical groups

- Working of KMeans:

  - Step 1 – Choose k, the number of clusters.

  - Step 2 – Randomly pick k initial cluster centers.

  - Step 3 – Assign each data point to the cluster center it is closest to. This gives the initial guess of the clusters.

  - Step 4 – Compute the centroid (mean) of each cluster. These centroids become the new cluster centers.

  - Step 5 – Repeat Step 3 and Step 4, reassigning points and recomputing centroids, until the clusters stop changing.
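The steps above can be sketched in a few lines of NumPy. This is only a minimal illustration, not the implementation from the notes: the function name `kmeans`, the `n_iters` and `seed` parameters, the choice of Euclidean distance, and the tiny dataset are all assumptions made for the example.

```python
import numpy as np

def kmeans(X, k, n_iters=100, seed=0):
    """Plain KMeans following Steps 1-5 (illustrative sketch, not optimized)."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    # Step 2: pick k distinct data points as the initial cluster centers
    centers = X[rng.choice(len(X), size=k, replace=False)]
    labels = None
    for _ in range(n_iters):
        # Step 3: assign each point to its nearest center (Euclidean distance)
        dists = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        new_labels = dists.argmin(axis=1)
        # Step 5: stop once the assignments no longer change
        if labels is not None and np.array_equal(new_labels, labels):
            break
        labels = new_labels
        # Step 4: move each center to the centroid (mean) of its points
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:  # keep the old center if a cluster is empty
                centers[j] = members.mean(axis=0)
    return centers, labels

# Tiny made-up dataset: two obvious groups of three points each
X = np.array([[0.0, 0.0], [0.1, 0.2], [0.2, 0.1],
              [5.0, 5.0], [5.1, 5.2], [5.2, 5.1]])
centers, labels = kmeans(X, k=2)
print(centers)
print(labels)
```

Which group ends up with label 0 or 1 depends on the random starting centers, so only the grouping itself is meaningful.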

- There is no easy way of choosing the best “k” value. One way which gives satisfactory results is the Elbow method.

- First, compute the Sum of Squared Errors (SSE) for a range of k values, for example 2, 4, 6, 8, etc.

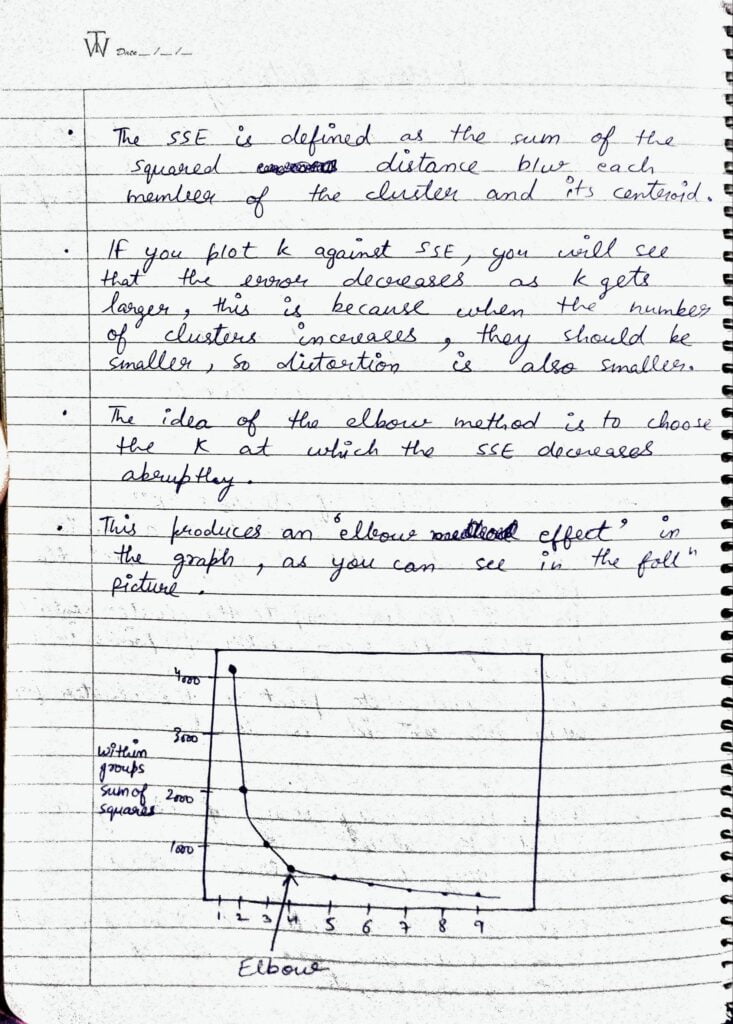

- SSE is defined as the sum of the squared distances between each member of a cluster and that cluster’s centroid, added up over all clusters.

- If you plot SSE against k, you will see that the error decreases as k increases. This is because with more clusters, each cluster is smaller and its points lie closer to their centroid, so the distortion is lower.

- The idea of the elbow method is to choose the “k” after which the SSE stops decreasing sharply and starts to level off.

- This produces an elbow effect in the graph as you can see in the image above.
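As a rough sketch of the elbow method, the snippet below fits scikit-learn's `KMeans` for several k values and records the SSE, which scikit-learn exposes as the `inertia_` attribute. The toy data, the k values, and every parameter value (`n_samples`, `cluster_std`, `random_state`, `n_init`) are assumptions picked only for illustration.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# Toy data with 4 true clusters (all parameter values here are arbitrary choices)
X, _ = make_blobs(n_samples=300, centers=4, cluster_std=0.6, random_state=42)

k_values = [2, 4, 6, 8]
sse = []
for k in k_values:
    model = KMeans(n_clusters=k, n_init=10, random_state=42).fit(X)
    # inertia_ is the sum of squared distances of points to their closest centroid
    sse.append(model.inertia_)

for k, err in zip(k_values, sse):
    print(f"k={k}: SSE={err:.1f}")
```

Plotting `k_values` against `sse` (for example with matplotlib) should show the bend: the drop in SSE is large up to the true number of clusters and much smaller after it.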

- To try this out, we first create our own data using sklearn.datasets.make_blobs and then fit KMeans on it.
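A minimal sketch of that workflow might look like the following. The sample count, number of centers, `cluster_std`, and `random_state` values are assumptions chosen for the example, not values taken from the notes.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# Create 200 two-dimensional points scattered around 4 centers
X, y_true = make_blobs(n_samples=200, centers=4, cluster_std=0.8, random_state=101)

# Fit KMeans with k=4; n_init=10 reruns the algorithm from 10 random starts
kmeans = KMeans(n_clusters=4, n_init=10, random_state=101)
kmeans.fit(X)

print(kmeans.cluster_centers_)  # one row of coordinates per cluster center
print(kmeans.labels_[:10])      # cluster index assigned to the first 10 points
```

Keep in mind that the cluster indices in `labels_` are arbitrary, so they will not necessarily match the numbering in `y_true` even when the grouping is the same.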

Do let me know if you have any questions about KMeans by contacting me via email or LinkedIn.

So that’s all for this blog, folks. Thanks for reading, and I hope you are taking something away with you. Till next time!

